What Leaked Documents Taught Us About Google's 14,000 Search Ranking Features

Spoiler alert!

Don’t read any further if you don’t want to be spoiled by these new leaks.

We made that mistake with Game of Thrones…

Jokes aside, here’s the scoop.

Back in May, Rand Fishkin, the Batman of the SEO community, released a set of leaked documents that contained information on more than 14,000 Google Search ranking features.

That’s right. 14,000.

How many can you name?

Released in the name of transparency, these 2,500 pages of API documents contain 14,014 attributes spread across 2,596 modules. Both Fishkin and iPullRank’s Mike King participated in decoding this documentation to reveal valuable information to the SEO community.

In the SEO community, we refer to this as “based.”

You probably don’t have the time or interest to wade through this entire documentation series – but don’t worry, we did! And we’ve come up with a perfectly digestible version for you to peruse at your leisure.

Let’s dig in!

The Big Findings

Within the documentation, each of the nearly 2,600 modules is broken down into summaries, types, functions, and attributes, with “attributes” defining characteristics or properties of a module that have an impact on search engine results page (SERP) rankings. However, attributes do not have weight scores, which is interesting.

Unfortunately, the lack of weight metrics creates a murky environment. We don’t know how important these attributes are, independently or with respect to each other, and we also can’t say for sure whether any of these attributes have since been retired. Some features are deprecated, and others contain notes that suggest they should no longer be used – but we don’t have the information necessary to confirm whether these advisements were followed.

The code associated with these documents is fairly recent; it was released March 27, 2024, so it should still be considered mostly, if not totally relevant.

Here are some major findings from Fishkin and King:

- Google lies (and domain authority is real). Yes, we all suspected it. But this documentation all but proves that Google has knowingly lied, misled, or misdirected us on multiple occasions. For example, Google authorities have, on multiple occasions, insisted that they don’t use “anything like domain authority” as part of their search rankings. Even John Mueller has said, “we don’t have website authority score.” However, there’s a critically important feature in the documentation called “siteAuthority” that seems to be… well, another name for domain authority. Unfortunately, there’s still a lot we don’t know about how this attribute works and the specifics of its application. But it’s an interesting and valuable finding, nonetheless, providing us with some reassurance that ignoring Google’s gaslighting and doubling down on domain authority optimization were good moves.

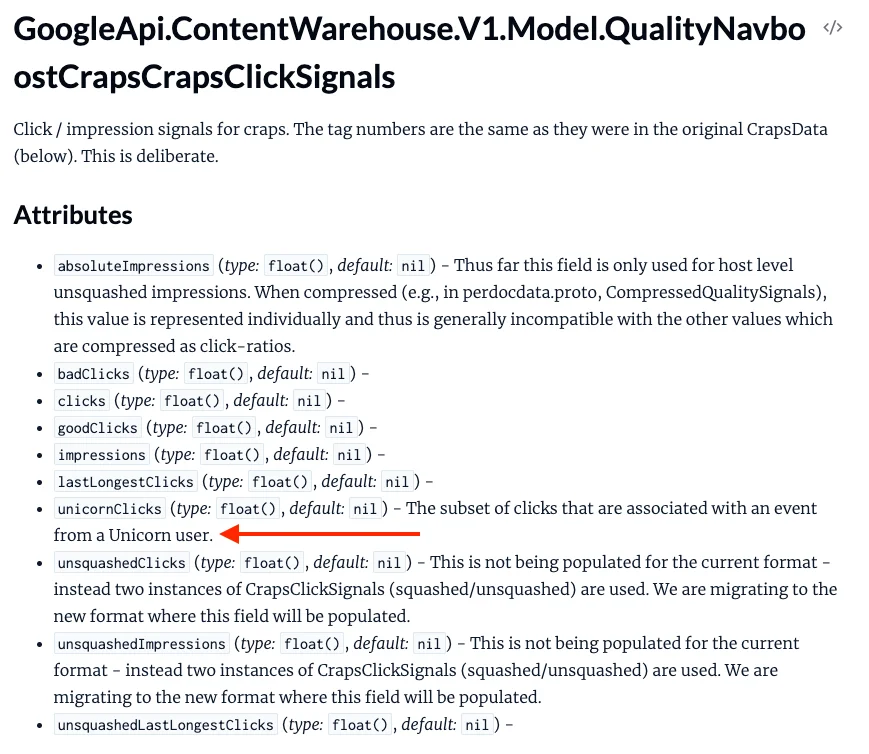

- Click data matters. Google also seems to pay close attention to “click signals.” In fact, there are several modules in the documentation that showcase features related to clicks, such as:

o goodClicks

o badClicks

o lasLongestClicks

o unsquashedImpressions

o squashedImpressions

o squashedClicks

o unsquashedClicks

o Unicorn clicks

It’s hard to say exactly what these mean (especially “Unicorn clicks,” which we’re very intrigued about). However, knowing that click data is relevant is a major finding. Fishkin also said, “Google has three buckets/tiers for classifying their link indexes (low, medium, high quality). Click data is used to determine which link graph index tier a document belongs to.”

- Chrome data is relevant. Unsurprisingly, Google takes volumes of data from Google Chrome browsers for generating search rankings. For example, one attribute known as chromeInTotal measures Chrome views at the site level. Though there is some ambiguity here, Fishkin speculates that Google uses the number of clicks on pages in Chrome browsers as a way to determine the most important and most popular URLs within a given domain.

- Certain domains are whitelisted. Certain attributes, like “isCovidLocalAuthority” and “isElectionAuthority,” seem to suggest that particular websites are whitelisted for controversial queries. Because of this, even websites that don’t have strong domain authority or conventional ranking factors can rank highly for queries relevant to them. We suspect this will also impact how Google's AI in search will filter misinformation as well. While this is ostensibly intended to combat misinformation and propaganda, it indicates a troubling amount of influence and control that Google has over the selection process. For some controversial queries, Google plays the role of arbiter in deciding who can be trusted and who can’t. Of course, if you inherently trust Google to always make the right calls, you may consider this a good thing.

- Human evaluators have influence. In an era increasingly reliant on AI and automation, it’s reassuring to know there are still some humans behind the scenes. According to the documentation, human evaluators play some role in assigning quality raters, although it’s not entirely clear how this works or how much of a role it plays.

- Twiddlers. “Twiddlers,” apparently, are re-ranking functions that serve like afterburners that engage after the primary search algorithm has run its course. You can think of them as mini filters that make adjustments to results before they go to the user directly. According to King, “Twiddlers can adjust the information retrieval score of a document or change the ranking of a document… Twiddlers can offer category constraints, meaning diversity can be promoted by specifically limiting the type of results. For instance the author may decide to only allow 3 blog posts in a given SERP. This can clarify when ranking is a lost cause based on your page format.”

There’s a lot more to explore in the initial post, so we encourage you to read it in full if you have the time. Also, decoding and analyzing these documents is an ongoing project, so you can expect more updates in the near future.

Key Takeaways for You

If you’re looking for some takeaways for your own SEO strategy, these are the best we’ve got:

- Content quality is evaluated somewhat simply. Ever since the Panda update, SEO professionals have had a lingering fear that content quality was evaluated in some super secret, super complex, opaque way. But now, it seems that Panda – and content quality evaluations in general – was pretty simple. Google primarily scores content quality based on the engagements of users; earning lots of links and indicators of positive behavior like time spent on page are incredibly valuable.

- Your brand name matters. In the SEO game, we often preoccupy ourselves with chasing backlinks in pursuit of higher domain authority and targeting the exact right keywords to earn more organic traffic. But it seems like the reputation and impact of your brand name, even outside of Google Search and digital spaces, play a major role in your search engine success. Companies should spend just as much time building their reputations as they do deliberately trying to increase their search rankings.

- Authorship matters. We already knew this, but these leaked documents reinforce the idea that authorship matters. Google pays very close attention to authorship and consistently determines whether on page entities are the author of that page.

- Links are still crucial. We've said for a long time that backlinks are the heart of SEO, and these leaked documents confirm that links are still extremely important. Google's indexing activities sort websites into tiers, with higher tiers associated with much more valuable links. Earning more valuable links more frequently can help any website propel themselves into higher tiers. Also, Google takes link spam very seriously, and carefully measures link velocity to assign penalties.

- Content freshness requires vigilance. Google has gone on record to say that the freshness, or newness of content makes an impact. But we didn't know just how important it was until now. It seems there are many different attributes related to freshness, meaning that it's more important than we realized to periodically update your best website content. Even small changes can help ensure your content continues to be evaluated as fresh and continues to rise in search rankings.

- There’s still a lot we don’t know. For better or worse, this seems to only be the beginning. The search documentation hasn't been fully decoded or fully analyzed, and we have no way of knowing for sure whether these documents are truly comprehensive. There's a lot more we still need to learn, so preserve your mindset of uncertainty in pursuit of better SEO results.

- Transparency is still limited. While the Google document leak sheds some light on Google’s ranking algorithm, there’s still much left to speculation. Even the insights we’ve gleaned from inside Google’s search division only scratch the surface of the processes that power organic search rankings. Publications emphasize the importance of maintaining a robust search engine optimization strategy centered on high-quality content, but the secrecy maintained by Google employees suggests there’s always more complexity than meets the eye. For now, SEO professionals must stay agile and adapt to the evolving landscape while continuing to learn from any revelations.

You don't have time to read about 14,000 search features.

But we do. In fact, we kind of like it.

And it's that commitment to SEO that sets us apart from our contemporaries. We do whatever it takes to stay on top of the latest developments in the industry and bring our clients the results they want.

So are you ready to take the next step in your SEO journey?

We can't promise we're going to perfectly apply all 14,000 search features to your website. But we can promise to provide you some of the best quality services in the SEO world, from technical SEO to content development and backlink building.

We’ll also leverage insights from leaked Google documents and internal documentation to help you understand how Google's algorithm impacts your site’s ranking and site authority score. By optimizing everything from page titles to internal links, we’ll ensure your website performs at its best in the search results—setting a new standard in the SEO industry and the broader marketing world.

If you're ready to take the next step, or if you just want to learn more about our process, reach out today!

.svg)

.jpg)

.jpg)

.jpg)